How do you teach software systems to sense their surroundings? This is the big question that drives the development of perception systems. Engineers who set out to build powerful perception solutions need a firm grasp of state-of-the-art engineering practices, and we have mastered these methods. Count on us to help you develop a perception system that gets the job done, whether for rapid manufacturing, for autonomous robots and vehicles that must interpret complex situations, or for whatever else your use case may entail.

Everything we do revolves around understanding your unique needs and delivering tailored solutions that meet them. Whether you need environment perception, machine vision, sensor fusion, or sensor services, we are here to help.

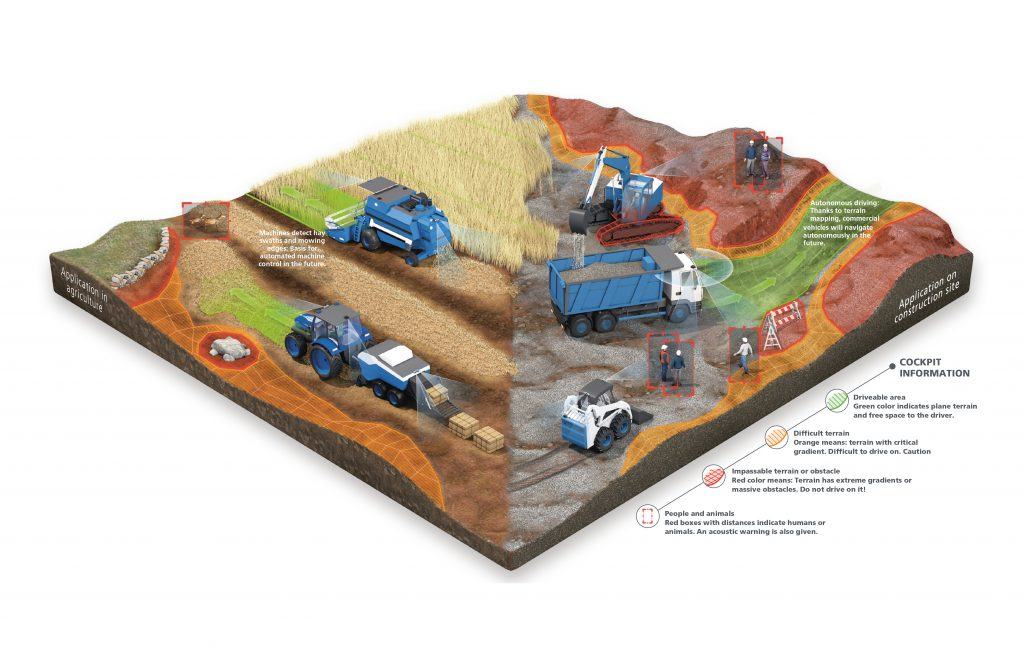

Environment perception is all about using sensors and algorithms to accurately sense and interpret the surroundings. Our 3D mapping, localization, object recognition, and tracking tools enable reliable planning and navigation for autonomous systems. We also offer 3DTM, a framework that automatically responds to obstacles and surfaces and analyzes and categorizes objects. This makes autonomous systems safer and allows engineers to add innovative features.

Advanced machine vision systems can boost efficiency in manufacturing. We deliver custom solutions built to meet your needs, covering factory virtualization, object recognition, object localization, pick-and-place robot control, 3D reconstruction, and fully automated 2D and 3D visual inspection. Whatever the job entails, from executing standard tasks with utmost efficiency to designing, implementing, and testing innovative solutions for highly complex challenges, count on us to get it done. Take advantage of our painstaking attention to detail, and let us team up to analyze and automate your production processes to improve their reliability, speed, and cost-efficiency.

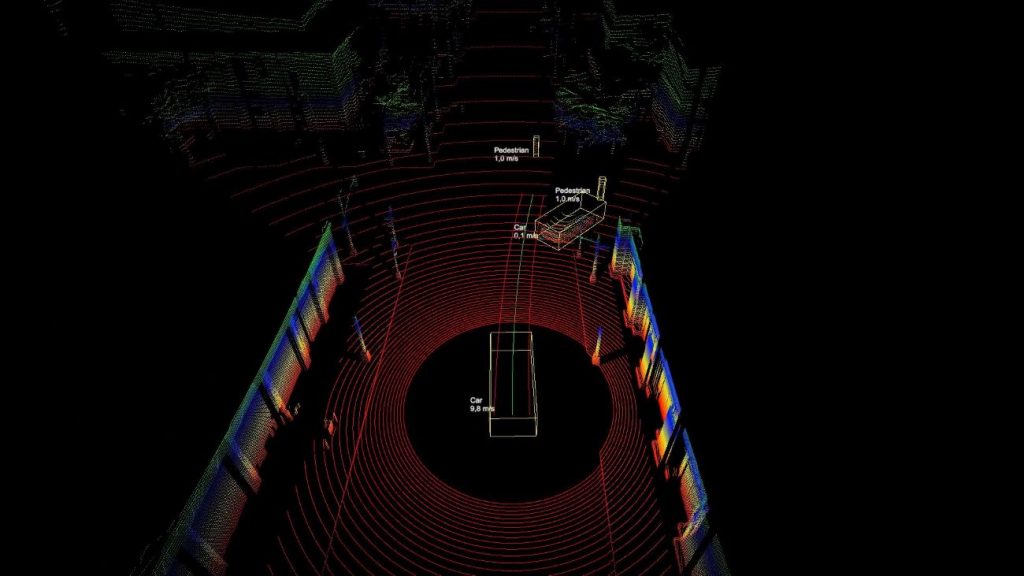

Sensor fusion is all about merging data from multiple sensors to obtain an accurate picture of the environment. Cameras, radar, LiDAR, ultrasound, GPS/IMU, and other instruments each contribute different kinds of readings.

Because one sensor's strengths compensate for another's weaknesses, fusing data from these disparate sensors improves accuracy, reliability, and coverage. Fusion can operate on raw sensor data or on preprocessed information, such as lists of detected objects.
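To illustrate how one sensor can offset another's weakness, here is a minimal sketch of a textbook technique, inverse-variance weighting, applied to preprocessed readings. It assumes each sensor reports the same scalar quantity (for example, the distance to a detected object) with independent, unbiased noise of known variance. The names `Reading` and `fuse` are hypothetical and chosen for illustration only; this is a generic example, not a description of our production fusion pipeline.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    """One scalar measurement and its uncertainty (hypothetical type)."""
    sensor: str
    value: float     # e.g. distance to an object in metres
    variance: float  # assumed known measurement noise variance (m^2)


def fuse(readings):
    """Fuse independent scalar readings by inverse-variance weighting.

    Sensors with lower variance (more certain) receive more weight.
    Returns (fused_value, fused_variance); the fused variance is never
    larger than that of the most certain single reading.
    """
    if not readings:
        raise ValueError("need at least one reading")
    for r in readings:
        if r.variance <= 0:
            raise ValueError(f"variance must be positive for {r.sensor}")
    weights = [1.0 / r.variance for r in readings]
    total = sum(weights)
    value = sum(w * r.value for w, r in zip(weights, readings)) / total
    return value, 1.0 / total


if __name__ == "__main__":
    # Hypothetical readings: radar is noisier in range than LiDAR here.
    radar = Reading("radar", 20.4, 0.25)
    lidar = Reading("lidar", 20.0, 0.01)
    value, variance = fuse([radar, lidar])
    print(f"fused distance: {value:.3f} m, variance: {variance:.4f} m^2")
```

With these assumed numbers, the fused estimate lands close to the more precise LiDAR value while still drawing on the radar reading, and its variance is slightly lower than the LiDAR variance alone. Real systems typically fuse vectors over time (for example, with Kalman filters) rather than single scalars.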

Sensor fusion figures prominently in autonomous driving, robotics, surveillance, and medical device use cases.

We provide a wide range of sensor-related services. Come to us if you need help selecting, sourcing, and calibrating the right sensors for the given task. Our engineers know how to integrate these assets into legacy systems as well as collect and analyze data. We are also your go-to source for data processing algorithms for cameras, radar, ultrasound, infrared, LiDAR, and other sensors. And we will be happy to provide custom sensor and environment simulations (iVESS) to support your design efforts.

Dr. Dominik Holling

Data Engineering, AI & Computer Vision